Definition

In machine learning, a random forest is a classifier that consists of multiple decision trees; its output class is the mode of the classes output by the individual trees. Leo Breiman and Adele Cutler developed the random forest algorithm, and "Random Forests" is their trademark. The term derives from the random decision forests proposed by Tin Kam Ho of Bell Labs in 1995. The method combines Breiman's "bootstrap aggregating" idea with Ho's "random subspace method" to build a collection of decision trees.

Learning algorithm

Build each tree according to the following algorithm:

Let N denote the number of training cases (samples) and M the number of features.

Choose the number of features m used to determine the decision at a node of the tree; m should be much smaller than M.

Sample N times with replacement from the N training cases to form a training set (i.e. bootstrap sampling), and use the cases that were not selected to make predictions and estimate the error.

For each node, randomly select m features; the decision at that node is based on these features. Compute the best split according to these m features.

Each tree is grown fully, without pruning (pruning is something that may be applied after an ordinary tree classifier has been built).
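The sampling steps above can be sketched as follows. This is a minimal illustration, not a full tree builder: the function names, the sizes N, M and m, and the fixed seed are all assumptions chosen for the example.

```python
import random

def bootstrap_sample(n_samples, rng):
    """Draw n_samples indices with replacement; return (in_bag, out_of_bag)."""
    in_bag = [rng.randrange(n_samples) for _ in range(n_samples)]
    chosen = set(in_bag)
    # Cases never drawn are "out of bag" and can be used to estimate the error.
    out_of_bag = [i for i in range(n_samples) if i not in chosen]
    return in_bag, out_of_bag

def features_for_node(n_features, m, rng):
    """Pick m distinct feature indices to consider at a single node."""
    return rng.sample(range(n_features), m)

rng = random.Random(0)   # fixed seed only so the sketch is reproducible
N, M, m = 10, 16, 4      # hypothetical sizes, with m much smaller than M
in_bag, out_of_bag = bootstrap_sample(N, rng)
node_features = features_for_node(M, m, rng)
print("in bag:", in_bag)
print("out of bag:", out_of_bag)
print("features considered at this node:", node_features)
```

Each tree in the forest would get its own bootstrap sample, and a fresh set of m features would be drawn at every node.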

Advantages

Advantages of random forests:

1) For many kinds of data, it can produce a highly accurate classifier;

2) It can handle a large number of input variables;

3) It can assess the importance of variables when determining the class;

4) While building the forest, it produces an internal, unbiased estimate of the generalization error;

5) It includes a good method for estimating missing data, and accuracy can be maintained even when a large proportion of the data is missing;

6) It provides an experimental method for detecting variable interactions;

7) For imbalanced classification data sets, it can balance the error;

8) It computes proximities between cases, which is useful for data mining, outlier detection and data visualization;

9) The above can be extended to unlabeled data, typically for unsupervised clustering; it can also be used to detect outliers and inspect the data;

10) The learning process is very fast.

Related concepts

1. Split: During the training of a decision tree, the training data set is split into two sub-datasets again and again. This process is called splitting.

2. Features: In a classification problem, the data fed into the classifier are called features. In a stock price forecasting problem, for example, the features might be the previous day's trading volume and closing price.

3. Candidate features (features to be selected): When constructing a decision tree, features are selected from the full set of features in some order. The candidate features are the features that have not yet been selected before the current step. For example, if the full set of features is A, B, C, D, E, the candidate features in the first step are A, B, C, D, E; if C is selected in the first step, the candidate features in the second step are A, B, D, E.

4. Split feature: Continuing from the definition of candidate features, each feature that is selected is a split feature. In the example above, the first split feature is C. These selected features are called split features because they divide the data set into disjoint parts.

Decision tree construction

To talk about random forests, we must first talk about decision trees. A decision tree is a basic classifier that generally divides data into two classes according to their features (decision trees can also be used for regression, which this article does not cover). The constructed decision tree has a tree structure and can be viewed as a collection of if-then rules. Its main advantages are that the model is readable and classification is fast.

We use the process of selecting a quantitative trading tool to illustrate how a decision tree is constructed. Suppose we want to choose a good quantitative tool to help us trade stocks better. How do we choose?

Step 1: Check whether the data provided by the tool is comprehensive. If it is not, do not use the tool.

Step 2: Check whether the API provided by the tool is easy to use. If it is not, do not use the tool.

Step 3: Check whether the tool's backtesting process is reliable. A strategy produced by unreliable backtesting is not worth using.

Step 4: Check whether the tool supports simulated trading. Backtesting only tells you whether a strategy would have worked historically; at least a period of simulated trading is needed before trading for real.

In this way, the quantitative tools on the market are labeled "use" or "do not use" according to "whether the data is comprehensive", "whether the API is easy to use", "whether backtesting is reliable" and "whether simulated trading is supported".
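The four checks can be read as a chain of if-then rules. The sketch below is only an illustration of that reading, assuming a tool is rejected as soon as it fails any check; the function and parameter names are hypothetical.

```python
def should_use_tool(data_comprehensive, api_easy, backtest_reliable, simulated_trading):
    """Walk the four checks in order and return the label for one tool."""
    if not data_comprehensive:   # Step 1
        return "do not use"
    if not api_easy:             # Step 2
        return "do not use"
    if not backtest_reliable:    # Step 3
        return "do not use"
    if not simulated_trading:    # Step 4
        return "do not use"
    return "use"

print(should_use_tool(True, True, True, True))
print(should_use_tool(True, False, True, True))
```

Each `if` corresponds to one internal node of the tree, and each `return` to a leaf carrying a label.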

The above is the construction of a decision tree, and its logic can be represented as in Figure 1:

In Figure 1, "data", "API", "backtest" and "simulated trading" in the green boxes are the features of this decision tree. If the order of the features differs, the decision tree built from the same data set may differ as well. Here the features are ordered "data", "API", "backtest", "simulated trading"; if we instead chose the order "data", "simulated trading", "API", "backtest", the resulting decision tree would be completely different.

It can be seen that the main job of a decision tree is to select features that divide the data set, so that the data end up with one of two labels. How do we choose the best feature? Take the tool selection example again: suppose there are 100 quantitative tools on the market as the training data set, and each of them has been labeled "use" or "do not use".

We first try to divide the data set into two groups by "Is the API easy to use?". We find that 90 tools have an easy-to-use API and 10 do not. Among the 90 tools, 40 are labeled "use" and 50 are labeled "do not use". The feature "Is the API easy to use?" therefore does not classify the data particularly well: given a new tool, even if its API is easy to use, you still cannot confidently label it "use".

Now suppose the same 100 tools are divided into two groups by "Does it support simulated trading?". One group contains 40 tools, all of which support simulated trading and all of which were labeled "use". The other group contains 60 tools, none of which support simulated trading, and all of which were labeled "do not use". If a new tool supports simulated trading, you can immediately judge that it can be used. We consider splitting the data by "whether it supports simulated trading" to be very effective.
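One way to put numbers on this comparison is the Gini impurity of each split, the quantity minimized by CART. The sketch below is an illustration only. The text does not state the labels of the 10 tools whose API is hard to use; since only 40 of the 100 tools are labeled "use" and all 40 already appear in the easy-API group, the code assumes those 10 are all "do not use".

```python
def gini(counts):
    """Gini impurity of a group given its per-class counts."""
    total = sum(counts)
    if total == 0:
        return 0.0
    return 1.0 - sum((c / total) ** 2 for c in counts)

def weighted_gini(groups):
    """Size-weighted average impurity of the groups produced by a split."""
    n = sum(sum(g) for g in groups)
    return sum(sum(g) / n * gini(g) for g in groups)

# Counts are (use, do not use).
parent = (40, 60)
api_split = [(40, 50), (0, 10)]   # second group inferred, see the note above
sim_split = [(40, 0), (0, 60)]

print(round(gini(parent), 3))              # 0.48
print(round(weighted_gini(api_split), 3))  # 0.444
print(round(weighted_gini(sim_split), 3))  # 0.0
```

Splitting on the API barely lowers the impurity, while splitting on simulated trading removes it entirely, which matches the informal judgment in the text.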

In real-world applications, data sets rarely split as cleanly as with "whether simulated trading is supported", so different criteria are used to measure the contribution of a feature. Three mainstream criteria are: the ID3 algorithm (proposed by J. Ross Quinlan in 1986) selects the feature with the largest information gain; the C4.5 algorithm (proposed by J. Ross Quinlan in 1993) selects features by information gain ratio; the CART algorithm (proposed by Breiman et al. in 1984) selects features by minimizing the Gini index.
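As a rough illustration of the first two criteria, the sketch below computes information gain and gain ratio from class counts, using the 100-tool example. The function names are hypothetical, and the counts for the 10 tools with a hard-to-use API are an assumption (all taken to be "do not use", which is consistent with the other numbers in the example).

```python
from math import log2

def entropy(counts):
    """Shannon entropy (in bits) of a group given its per-class counts."""
    total = sum(counts)
    return -sum((c / total) * log2(c / total) for c in counts if c > 0)

def information_gain(parent, groups):
    """Entropy of the parent minus the size-weighted entropy of the groups (ID3)."""
    n = sum(parent)
    remainder = sum(sum(g) / n * entropy(g) for g in groups)
    return entropy(parent) - remainder

def gain_ratio(parent, groups):
    """Information gain divided by the entropy of the group sizes (C4.5)."""
    split_info = entropy([sum(g) for g in groups])
    if split_info == 0:
        return 0.0
    return information_gain(parent, groups) / split_info

# Counts are (use, do not use).
parent = (40, 60)
api_split = [(40, 50), (0, 10)]   # second group is an assumption
sim_split = [(40, 0), (0, 60)]

for name, split in [("API", api_split), ("simulated trading", sim_split)]:
    print(name, round(information_gain(parent, split), 3), round(gain_ratio(parent, split), 3))
```

Under both criteria the "simulated trading" split scores higher than the "API" split, so either algorithm would pick it first.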

Building a random forest

A decision tree is like a single expert, classifying new data using the knowledge it has learned from the data set. But as the saying goes, one Zhuge Liang is no match for three cobblers. A random forest is an algorithm that builds many such "cobblers" in the hope that their combined classification will outperform a single expert.

How is a random forest built? There are two aspects: random selection of the data, and random selection of the candidate features.

1. Random selection of data:

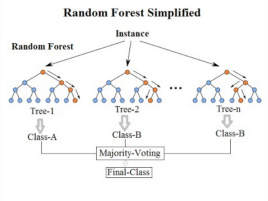

First, sample with replacement from the original data set to construct a sub-dataset whose size is the same as that of the original data set. Elements may be repeated across different sub-datasets, and elements within the same sub-dataset may also be repeated. Second, use each sub-dataset to build a sub-decision tree; when a data item is fed into the sub-decision trees, each tree outputs a result. Finally, when new data needs to be classified by the random forest, the output of the forest is obtained by voting over the results of the sub-decision trees. As shown in Figure 3, if the random forest contains 3 sub-decision trees, 2 of which output type A and 1 of which outputs type B, then the classification result of the random forest is type A.
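The sampling and voting described above can be sketched in a few lines. This is a minimal illustration: the tree-building step is left out, the toy data set is made up, and the helper names are hypothetical.

```python
import random
from collections import Counter

def bootstrap(dataset, rng):
    """Sub-dataset of the same size as the original, drawn with replacement."""
    return [rng.choice(dataset) for _ in dataset]

def majority_vote(predictions):
    """Return the class predicted by the most sub-decision trees."""
    return Counter(predictions).most_common(1)[0][0]

rng = random.Random(0)                        # fixed seed for reproducibility
dataset = ["t1", "t2", "t3", "t4", "t5"]      # made-up sample identifiers
sub_datasets = [bootstrap(dataset, rng) for _ in range(3)]
print(sub_datasets)

# Three sub-decision trees vote as in the example: two say A, one says B.
print(majority_vote(["A", "A", "B"]))   # A
```

With an even number of trees a tie is possible; `Counter.most_common` then returns the tied class that was seen first, so a real implementation would need an explicit tie-breaking rule.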

2. Random selection of candidate features:

Similar to the random selection of data, each split in a subtree of the random forest does not consider all candidate features. Instead, a certain number of features are randomly selected from all candidate features, and the optimal feature is then chosen from among the randomly selected ones. In this way, the decision trees in the random forest differ from one another, which increases the diversity of the system and thereby improves classification performance.

In Figure 4, the blue squares represent all the features that can be selected, that is, the candidate features. The yellow square is a split feature. On the left is the feature selection process of a single decision tree: a split is completed by selecting the optimal split feature from the candidate features (recall the ID3, C4.5 and CART algorithms mentioned above). On the right is the feature selection process of a subtree in a random forest.
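The difference between the two selection processes can be sketched as follows. The example scores features by weighted Gini impurity, which is one possible criterion; the tiny data set, the feature names and the helper functions are all assumptions made for illustration.

```python
import random

def gini(labels):
    """Gini impurity of a list of class labels."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((labels.count(c) / n) ** 2 for c in set(labels))

def split_score(rows, labels, feature):
    """Weighted Gini after splitting on a boolean feature (lower is better)."""
    left = [y for r, y in zip(rows, labels) if r[feature]]
    right = [y for r, y in zip(rows, labels) if not r[feature]]
    n = len(labels)
    return len(left) / n * gini(left) + len(right) / n * gini(right)

def best_split_feature(rows, labels, candidates, m, rng):
    """Draw m candidate features at random, then pick the best among them."""
    subset = rng.sample(candidates, m)
    best = min(subset, key=lambda f: split_score(rows, labels, f))
    return best, subset

# Made-up training rows: one dict of boolean features per tool.
rows = [
    {"data": True,  "api": True,  "backtest": True,  "sim": True},
    {"data": True,  "api": True,  "backtest": False, "sim": True},
    {"data": True,  "api": True,  "backtest": True,  "sim": False},
    {"data": False, "api": True,  "backtest": True,  "sim": False},
    {"data": True,  "api": False, "backtest": False, "sim": False},
]
labels = ["use", "use", "do not use", "do not use", "do not use"]
candidates = ["data", "api", "backtest", "sim"]

rng = random.Random(0)
# Single decision tree: every candidate feature is considered.
print(best_split_feature(rows, labels, candidates, len(candidates), rng)[0])
# Random forest subtree: only m = 2 randomly drawn candidates are considered.
print(best_split_feature(rows, labels, candidates, 2, rng))
```

When all candidates are considered, the split always lands on the globally best feature; with a random subset, different subtrees can end up splitting on different features, which is the source of the diversity described above.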